Analysis and Visualization of Dangdang Bestseller Book Ranking Data with Flink

舟率率 11/7/2025 python flask

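# Project Overview

# Data Type

Dangdang bestseller book ranking data

# Development Environment

CentOS 7

# Software Versions

Python 3.8.18, Hadoop 3.2.0, Flink 1.14.6, MySQL 5.7.38, JDK 8

# Programming Languages

Python, Java

# Development Workflow

Data cleaning (Python) -> data upload (HDFS) -> data analysis (Flink) -> data storage (MySQL) -> backend (Flask) -> frontend (HTML + JS + CSS)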

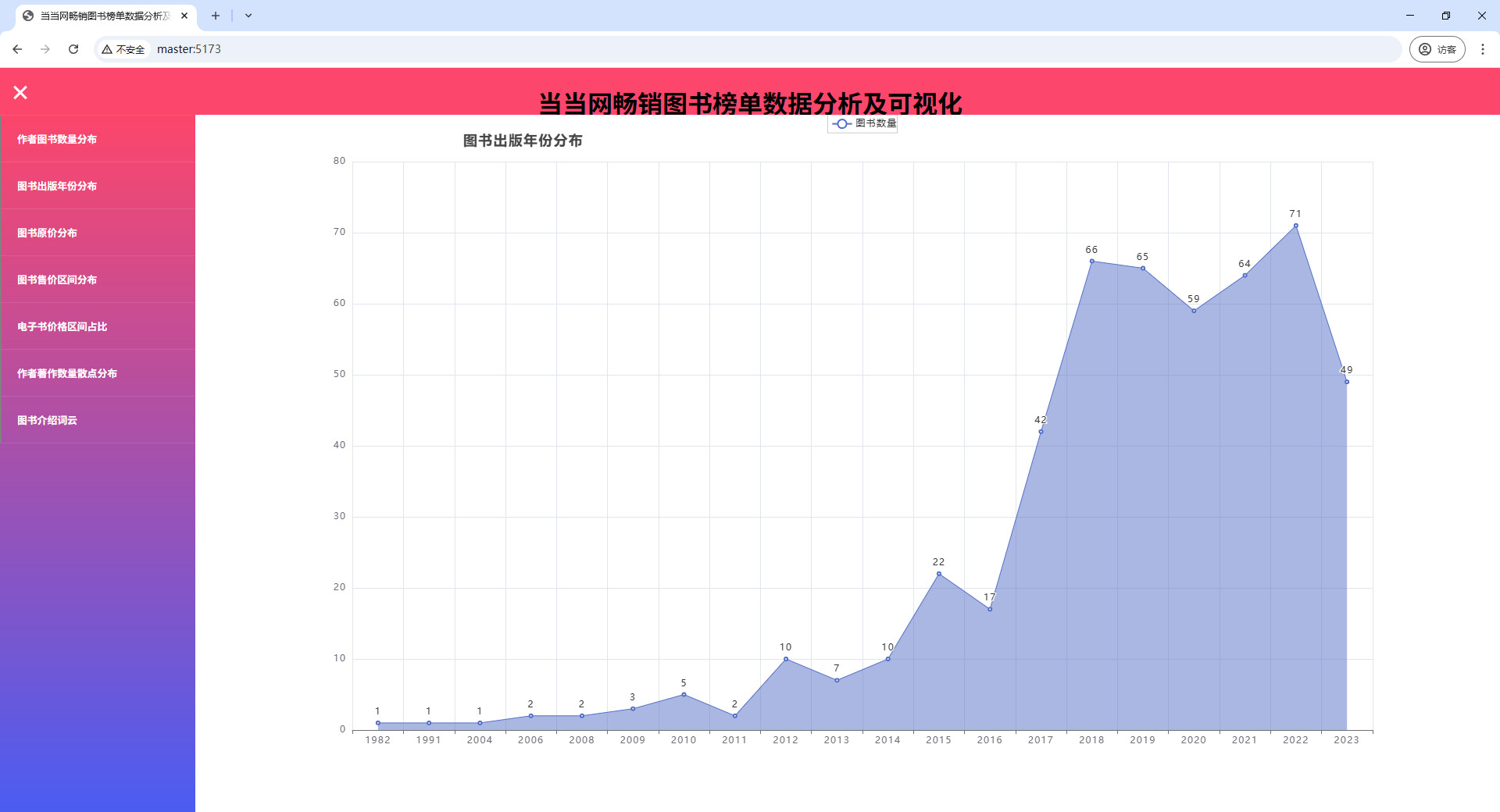

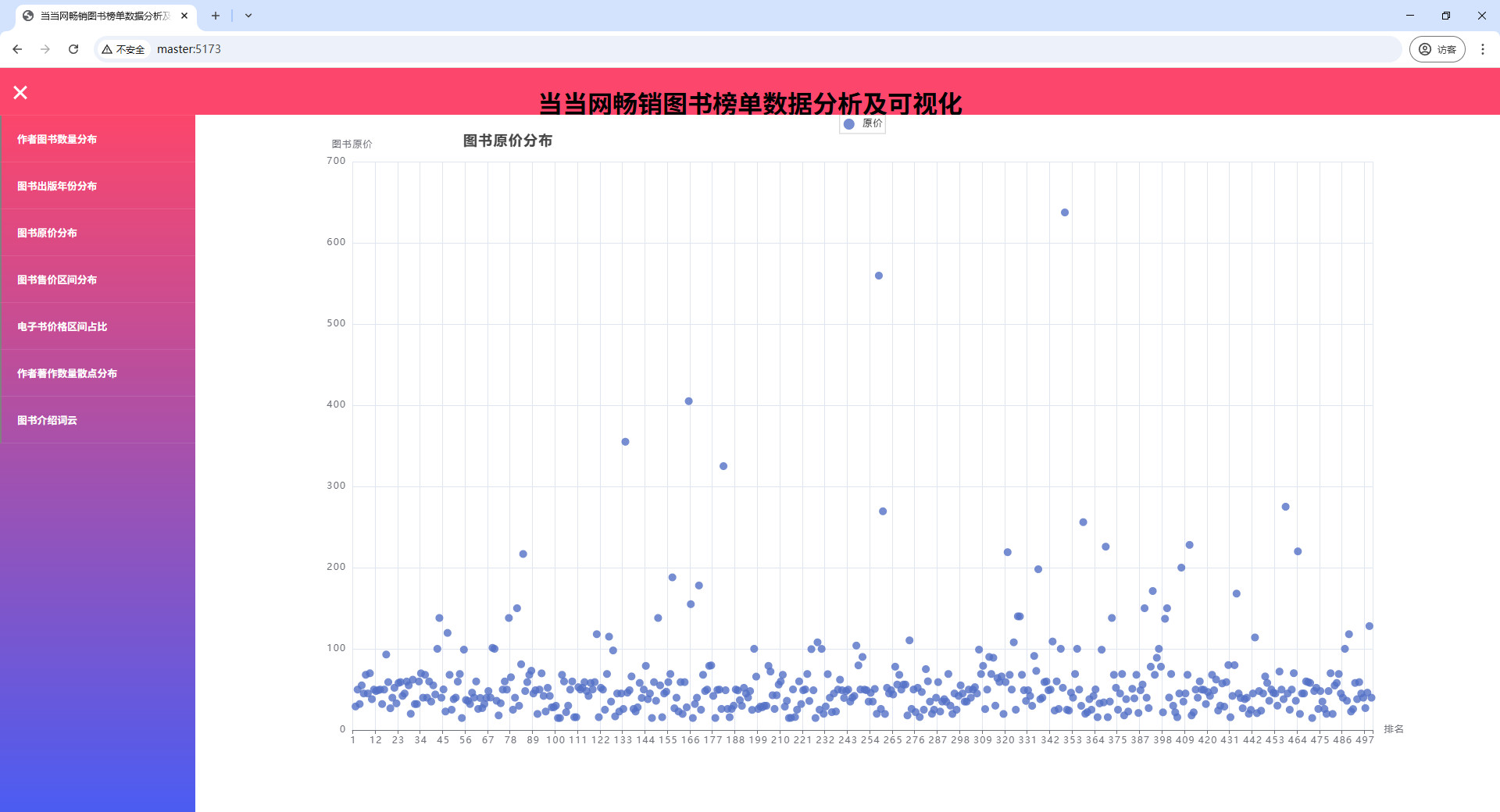

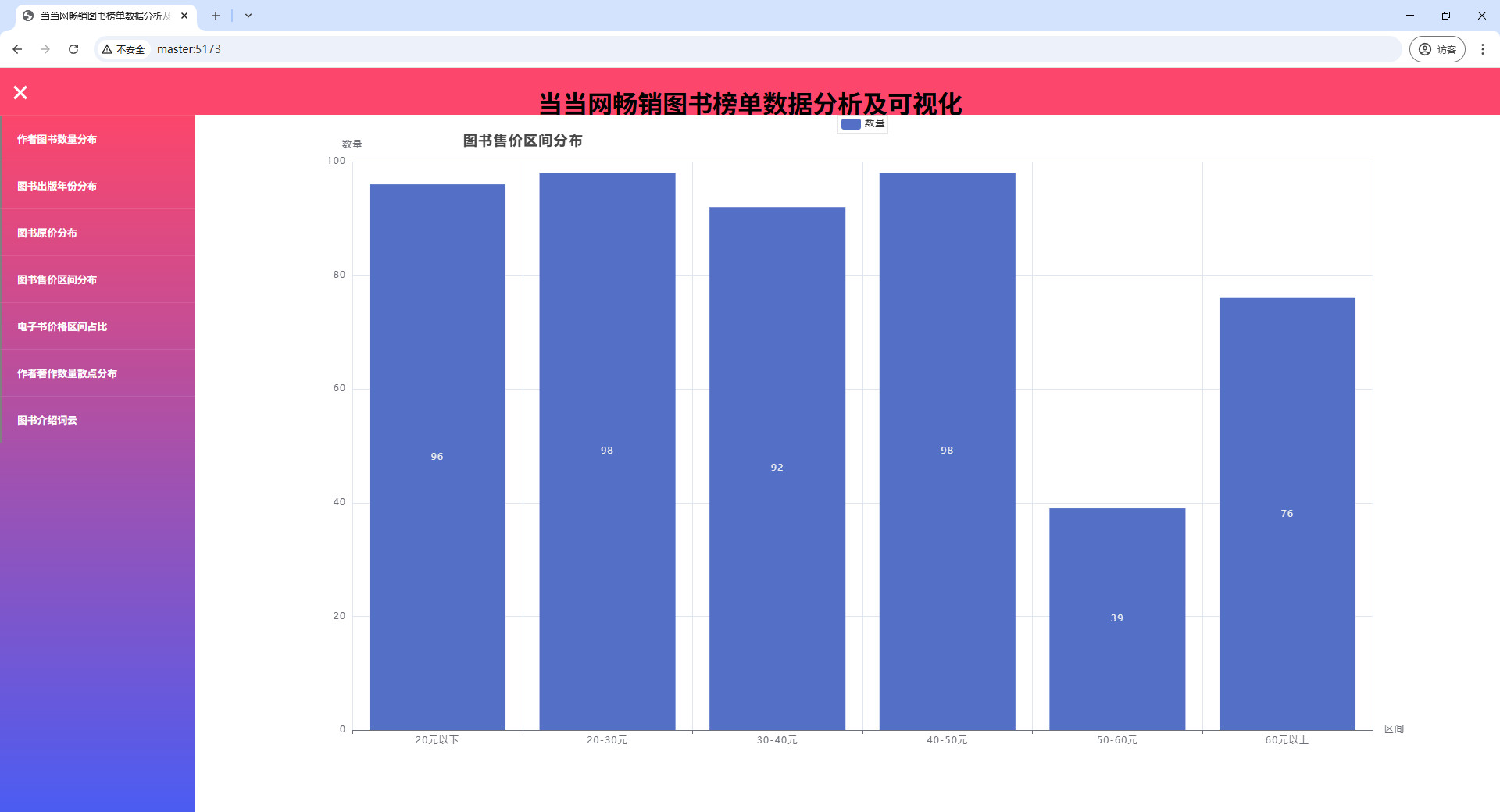

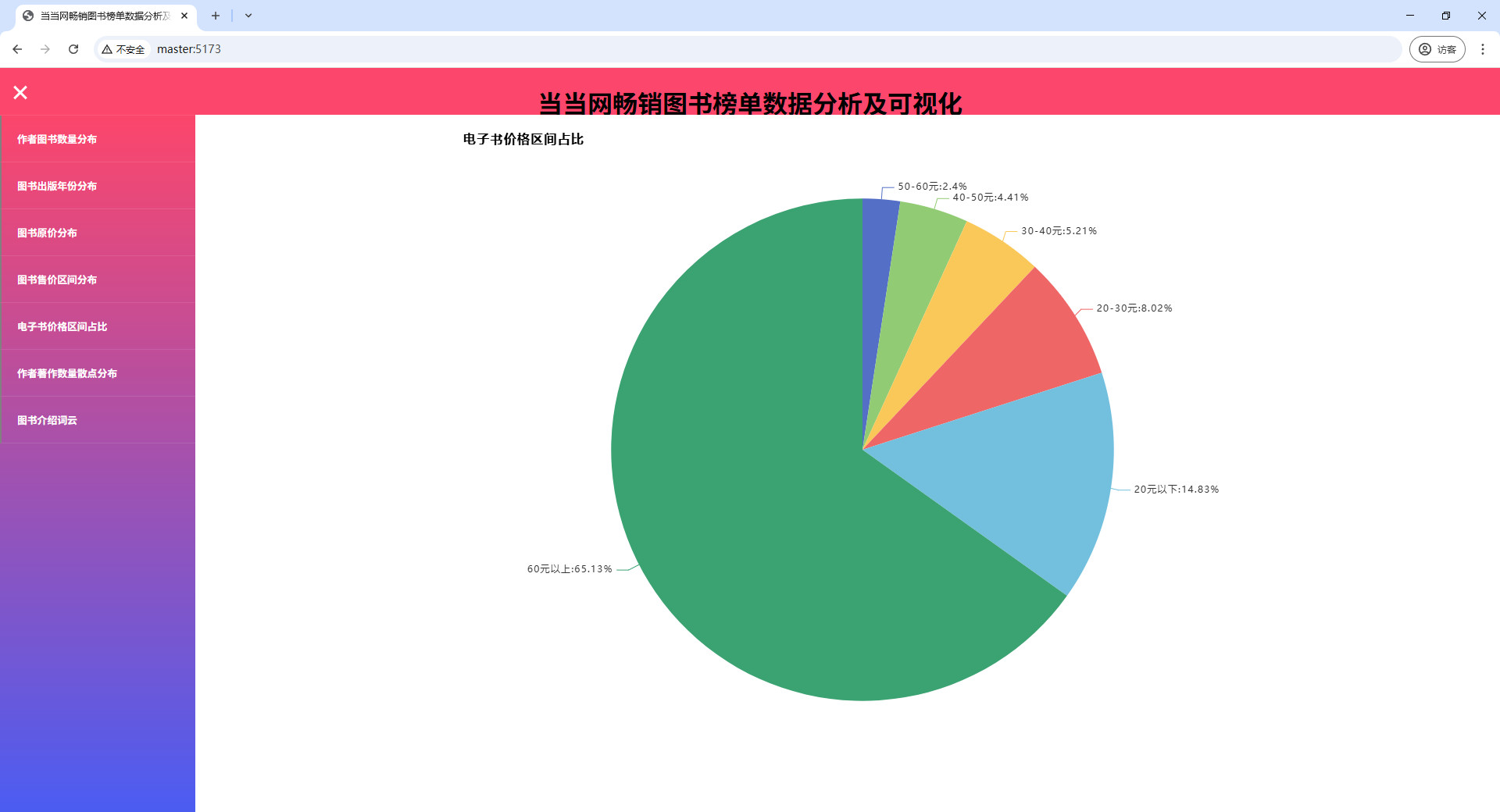

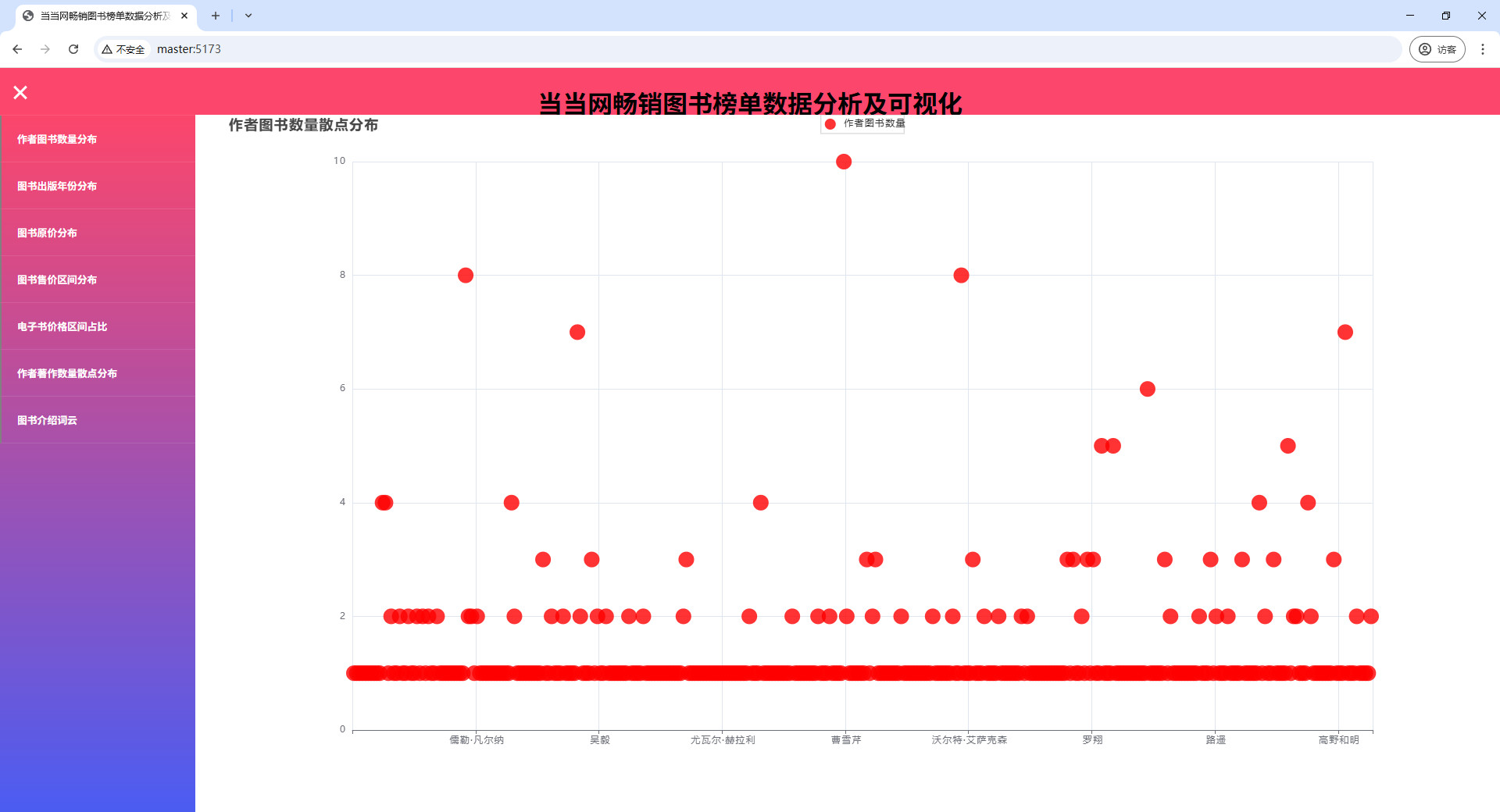

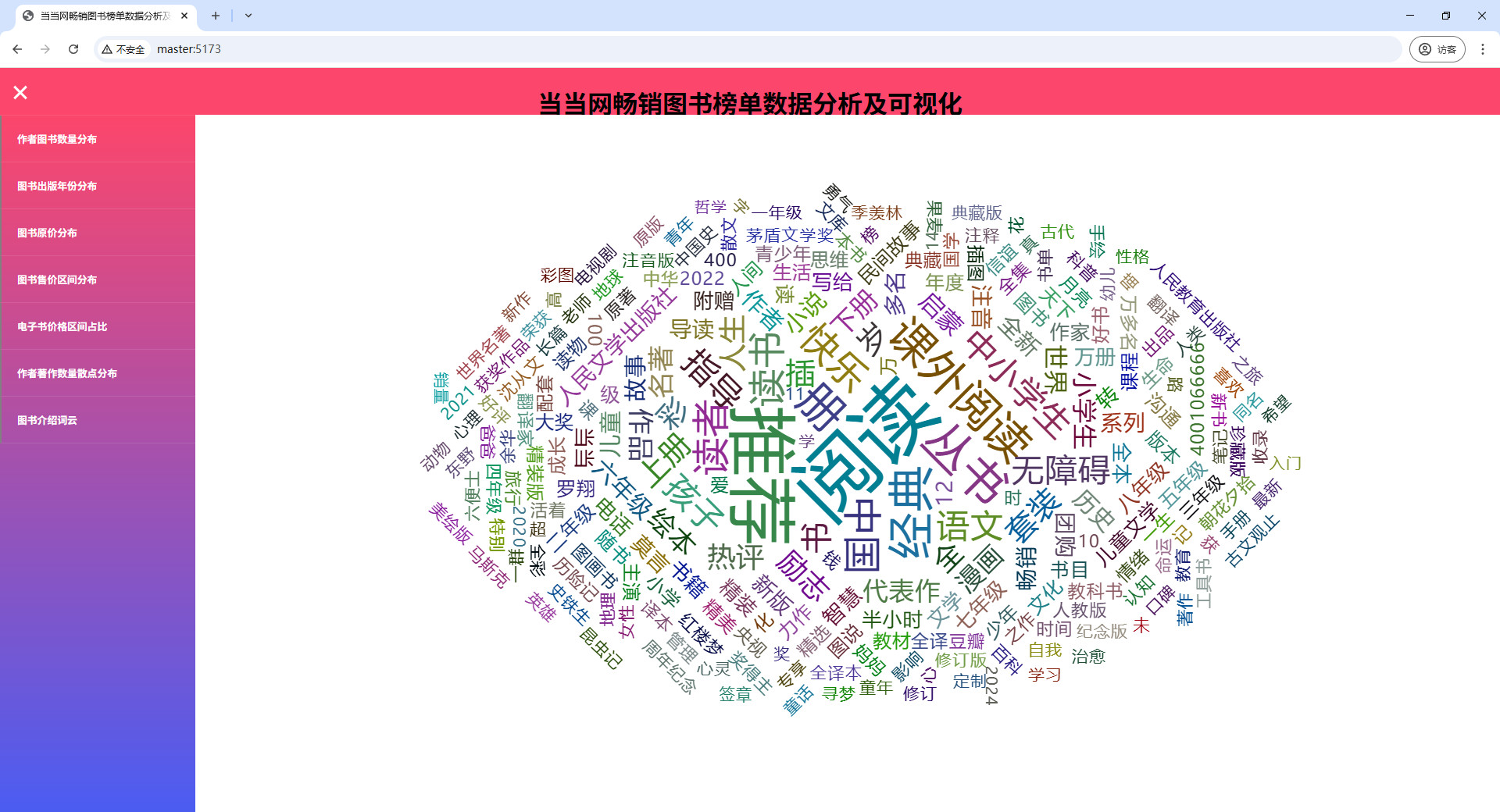

# Visualization Charts

# Steps

# Python Packages

pip3 install pandas==2.0.3 -i https://pypi.tuna.tsinghua.edu.cn/simple/

pip3 install pyecharts==2.0.4 -i https://pypi.tuna.tsinghua.edu.cn/simple/

pip3 install flask==3.0.0 -i https://pypi.tuna.tsinghua.edu.cn/simple/

pip3 install flask-cors==4.0.1 -i https://pypi.tuna.tsinghua.edu.cn/simple/

pip3 install pymysql==1.1.0 -i https://pypi.tuna.tsinghua.edu.cn/simple/

pip3 install jieba==0.42.1 -i https://pypi.tuna.tsinghua.edu.cn/simple/
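
After installing, it can help to confirm that the environment really has the pinned versions. The following is a minimal sketch using only the standard library; the helper names `installed_version` and `find_mismatches` are made up for this example, and the version table is simply copied from the pip3 commands above.

```python
from importlib import metadata

# Pinned versions copied from the pip3 commands above.
REQUIRED = {
    "pandas": "2.0.3",
    "pyecharts": "2.0.4",
    "flask": "3.0.0",
    "flask-cors": "4.0.1",
    "pymysql": "1.1.0",
    "jieba": "0.42.1",
}


def installed_version(package):
    """Return the installed version string, or None if the package is missing."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


def find_mismatches(required, lookup=installed_version):
    """Map each package whose installed version differs to (expected, found)."""
    mismatches = {}
    for package, expected in required.items():
        found = lookup(package)
        if found != expected:
            mismatches[package] = (expected, found)
    return mismatches


if __name__ == "__main__":
    # Prints one line per package that is missing or on a different version.
    for name, (expected, found) in find_mismatches(REQUIRED).items():
        print(f"{name}: expected {expected}, found {found or 'not installed'}")
```

An empty output means every package matches its pinned version.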

# Start MySQL

# Check whether MySQL is running. To start it: systemctl start mysqld.service

systemctl status mysqld.service

# Open the MySQL shell

mysql -uroot -p123456

# Start Hadoop

# Leave safe mode: hdfs dfsadmin -safemode leave

# Start Hadoop

bash /export/software/hadoop-3.2.0/sbin/start-hadoop.sh

# Start Flink

# Start

/export/software/flink-1.14.6/bin/start-cluster.sh

# Stop

/export/software/flink-1.14.6/bin/stop-cluster.sh
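
Before moving on, a quick reachability check of MySQL, Hadoop and Flink can save some debugging later. The sketch below only tests whether a TCP port accepts connections. The host and port values are assumptions: 3306 and 8081 are the usual MySQL and Flink web UI defaults, and `master:9000` is taken from the HDFS URI that the Flink job reads. Adjust them to your own setup.

```python
import socket

# Assumed endpoints: MySQL default port, the NameNode address from the
# job's HDFS URI, and the default Flink web UI port.
SERVICES = {
    "mysql": ("localhost", 3306),
    "hdfs-namenode": ("master", 9000),
    "flink-web-ui": ("localhost", 8081),
}


def is_port_open(host, port, timeout=2.0):
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts and unresolvable host names.
        return False


def check_services(services, probe=is_port_open):
    """Return a dict mapping each service name to True/False reachability."""
    return {name: probe(host, port) for name, (host, port) in services.items()}


if __name__ == "__main__":
    for name, ok in check_services(SERVICES).items():
        print(f"{name}: {'reachable' if ok else 'NOT reachable'}")
```

An open port only shows that something is listening there; it does not prove the service is healthy, so `systemctl status` and the web UIs remain the authoritative checks.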

# Data Cleaning

mkdir -p /data/jobs/project/output/

cd /data/jobs/project/

# Upload data/当当畅销榜图书数据.csv

# Upload data_clean.py

mv 当当畅销榜图书数据.csv output/

python3 /data/jobs/project/data_clean.py
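
The contents of data_clean.py are not shown in this post. As a rough illustration of what such a cleaning step often looks like, here is a standard-library sketch that strips whitespace, drops rows with empty cells and removes exact duplicates. The rules and the function names `clean_rows` and `clean_file` are assumptions made for this example, not the project's actual logic.

```python
import csv


def clean_rows(rows):
    """Strip whitespace, drop rows with empty cells, and drop exact duplicates."""
    seen = set()
    cleaned = []
    for row in rows:
        values = tuple(cell.strip() for cell in row)
        # Skip blank lines and rows that have at least one empty cell.
        if not values or any(cell == "" for cell in values):
            continue
        # Keep only the first occurrence of each identical row.
        if values in seen:
            continue
        seen.add(values)
        cleaned.append(list(values))
    return cleaned


def clean_file(src, dst, encoding="utf-8"):
    """Clean src into dst, keeping the header row; return the data row count."""
    with open(src, newline="", encoding=encoding) as f:
        reader = csv.reader(f)
        header = next(reader, None)
        rows = clean_rows(reader)
    with open(dst, "w", newline="", encoding=encoding) as f:
        writer = csv.writer(f)
        if header is not None:
            writer.writerow(header)
        writer.writerows(rows)
    return len(rows)
```

With the layout used here, a call would look like `clean_file("output/当当畅销榜图书数据.csv", "output/result.csv")`. Whether the real script keeps a header row in result.csv is unknown, so check what the Flink job expects before reusing this.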

# Data Upload

cd /data/jobs/project/

hdfs dfs -mkdir -p /data/origin/dangdang_book/

hdfs dfs -rm -r /data/origin/dangdang_book/*

hdfs dfs -put -f output/result.csv /data/origin/dangdang_book/

hdfs dfs -ls /data/origin/dangdang_book/
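
If the upload has to be repeated often, the same four commands can be driven from Python. This is a minimal sketch that assumes the `hdfs` binary is on the PATH; `build_upload_commands` and `run_upload` are names invented for this example.

```python
import subprocess


def build_upload_commands(local_file, hdfs_dir):
    """Return the four hdfs commands from the step above as argument lists."""
    hdfs_dir = hdfs_dir.rstrip("/") + "/"
    return [
        ["hdfs", "dfs", "-mkdir", "-p", hdfs_dir],
        # The glob is passed through unchanged; the hdfs shell expands it.
        ["hdfs", "dfs", "-rm", "-r", hdfs_dir + "*"],
        ["hdfs", "dfs", "-put", "-f", local_file, hdfs_dir],
        ["hdfs", "dfs", "-ls", hdfs_dir],
    ]


def run_upload(local_file, hdfs_dir, runner=subprocess.run):
    """Run each command in order and return the list of exit codes."""
    codes = []
    for cmd in build_upload_commands(local_file, hdfs_dir):
        # No check=True: the -rm step exits non-zero when the directory
        # is still empty, which is expected on the first run.
        codes.append(runner(cmd).returncode)
    return codes


if __name__ == "__main__":
    for cmd in build_upload_commands("output/result.csv", "/data/origin/dangdang_book/"):
        print(" ".join(cmd))
```

Run as a script, it only prints the commands. Call `run_upload("output/result.csv", "/data/origin/dangdang_book/")` from /data/jobs/project/ to execute them.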

# Create MySQL Tables

cd /data/jobs/project/

# Make sure the MySQL service is already running

# Run all SQL statements in the .sql file in one go

mysql -uroot -p123456 < mysql.sql

# Data Analysis

# Package the "flink-job" project under the "project-dangdang-book-analysis" directory

# Build command: mvn clean package -DskipTests

# After the build finishes, upload "flink-job.jar" from the "flink-job/target/" directory to "/data/jobs/project/"

cd /data/jobs/project/

flink run -c org.example.Main flink-job.jar

# To run locally, remove the following:

# <exclude>org.apache.flink:flink-java</exclude>

# <exclude>org.apache.flink:flink-core</exclude>

# <exclude>org.apache.flink:flink-clients_${scala.version}</exclude>

# <exclude>org.apache.flink:flink-table-planner_${scala.version}</exclude>

# <exclude>org.apache.flink:flink-table-api-java-bridge_${scala.version}</exclude>

# <exclude>org.apache.flink:flink-table-common</exclude>

# <exclude>org.codehaus.janino:janino</exclude>

# <exclude>org.codehaus.janino:commons-compiler</exclude>

# <exclude>org.codehaus.commons-compiler:commons-compiler</exclude>

# <scope>${scope.type.provided}</scope>

# To run locally, change the following:

# Replace with a local file path

# hdfs://master:9000/data/origin/dangdang_book/

# Start the Visualization

mkdir -p /data/jobs/project/myapp/

cd /data/jobs/project/myapp/

# Upload the files from the "可视化" (visualization) directory into the myapp directory

# To run locally on Windows: python3 app.py

python3 app.py pro
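
The `pro` argument suggests that app.py switches between local and server settings based on its first command-line argument. The code of app.py is not shown here, so the following is only a guess at how such a switch could be written; `select_config`, the port and the host values are all assumptions.

```python
import sys


def select_config(argv):
    """Pick run settings from the command line; 'pro' selects server mode."""
    production = len(argv) > 1 and argv[1] == "pro"
    if production:
        # Assumed server settings: listen on all interfaces, debug off.
        return {"host": "0.0.0.0", "port": 5000, "debug": False}
    # Assumed local settings: loopback only, debug on.
    return {"host": "127.0.0.1", "port": 5000, "debug": True}


if __name__ == "__main__":
    config = select_config(sys.argv)
    # In a Flask app these values could be passed on as app.run(**config).
    print(config)
```

Keeping the decision in one small function makes it easy to see what differs between the Windows run and the server run, such as which MySQL host the backend should connect to.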
